The rise of AI deepfakes has created a strange new problem: people now struggle to prove they are real. What once sounded like science fiction is now a practical challenge affecting everyday conversations and global leaders alike.

To test this idea, one writer conducted a simple experiment. He called his aunt and asked her to guess whether she was speaking to him or an AI-generated version of his voice. At first, she felt confident. The voice sounded natural and familiar. However, as the conversation continued, doubt crept in. She hesitated and admitted she was no longer completely sure.

This uncertainty highlights a growing issue. Deepfake technology has advanced to the point where even close family members can question what they hear. These tools can mimic tone, pauses, and emotional expression with impressive accuracy.

Most discussions about deepfakes focus on scams, misinformation, or election interference. While those risks remain serious, another concern is emerging. What happens when someone questions your identity? How do you prove you are real?

This challenge recently affected Israeli Prime Minister Benjamin Netanyahu. A video clip of him sparked online rumors after viewers noticed what looked like an extra finger. Many took this as a sign that the footage was AI-generated and claimed that he had died.

To counter these claims, he released another video. In it, he showed his hands clearly to prove nothing was unusual. Despite this effort, many people still refused to believe the footage was genuine. The situation revealed how difficult it has become to convince audiences of reality.

Experts say the original video showed normal visual distortions, such as lighting reflections. Modern AI systems rarely make simple errors like extra fingers anymore. Instead, they produce highly realistic outputs that make detection harder.

Another clue experts look for is continuity. In one clip, Netanyahu accidentally bumped a microphone, creating a sound that interrupted his speech. This type of natural interaction remains difficult for AI to replicate consistently.

Even so, proving authenticity is no longer straightforward. In everyday life, people rely on personal details or shared memories to confirm identity. In one example, a family member asked for a childhood nickname to verify that a message was genuine.

However, this method does not work in public settings. Global figures cannot rely on private memories to prove their identity to millions of people. This creates a serious gap in trust.

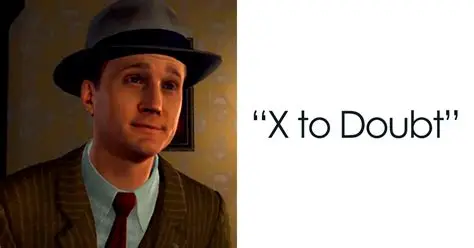

The bigger issue is not just fake content—it is doubt itself. When people start questioning everything, even real evidence loses its power. This shift could reshape how we trust media, communication, and even each other.

As AI continues to improve, the challenge will only grow. New systems may soon replicate voices, faces, and behavior with near-perfect accuracy. Society will need stronger verification tools to keep up.

Until then, one thing is clear: proving you are human is no longer as simple as it once was.